
Are you struggling to get pages indexed, even when your SEO is otherwise sound? If so, you’re not alone. Content creators around the world are reporting indexing issues, and many of them previously had no problem getting their pages listed in search. So what’s happening? And, more importantly, how can you solve it?

The problem

SEOs and content writers first started noticing indexing issues in the final quarter of 2021. Pages that previously would have been crawled and listed in days or weeks were suddenly going unnoticed, often for months on end. Other search engines were not having the same issues. Organic traffic started to take a hit.

Even the ‘Request Indexing’ feature in Search Console was producing inconsistent results. In the past, many saw the button as a ‘cheat code’ that prompted Google to crawl and index a page, often within minutes. Now it no longer had the same effect, and new posts were left in the dark.

On 13th December 2021, Google acknowledged that it was experiencing an internal issue with false redirect errors during indexing. This was a Google error that had nothing to do with individual websites, but the problem was still felt by businesses that could no longer rely on organic visibility for new posts.

An internal issue is causing an increase of redirect errors during indexing, and associated email notifications. This is not due to any website issues, but is due to an internal Google issue. We hope to fix this problem quickly.

— Google Search Central (@googlesearchc) December 13, 2021

The issue was resolved on 21st December 2021, with Google thanking users for their patience. However, while the glitch itself was fixed, its repercussions were far from resolved. The bug caused a huge indexing backlog, which is still an issue as of February 2022. So, with businesses around the world still struggling to get their content listed in Google, what can SEOs do to get ahead of the competition?

Identify your specific issue

For the purposes of this guide, I will assume that your new page is already optimised with solid SEO principles and that it is available to be indexed into the Google ecosystem. It goes without saying that if your content does not follow Google’s guidelines, then getting it indexed will be an uphill battle.
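One quick way to confirm that a page is at least technically open to indexing is to look for a `noindex` directive, which can appear either in a robots `<meta>` tag or in an `X-Robots-Tag` HTTP header. The sketch below uses only Python’s standard library; the helper name `blocks_indexing` and the sample page are hypothetical, and it deliberately ignores other blockers such as robots.txt rules or canonical tags.

```python
import re
from html.parser import HTMLParser


def _tokens(value):
    # Split e.g. "googlebot: noindex, nofollow" into ["googlebot", "noindex", "nofollow"].
    return [t for t in re.split(r"[,:\s]+", value.lower()) if t]


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lower-cases tag and attribute names; attribute values may be None.
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.extend(_tokens(attr.get("content", "")))


def blocks_indexing(html, headers):
    """Hypothetical helper: True if the HTML or X-Robots-Tag header says noindex/none."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    directives = list(parser.directives)
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            directives.extend(_tokens(value))
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in directives or "none" in directives


page = '<head><meta name="robots" content="noindex, follow"></head>'
print(blocks_indexing(page, {}))  # True
```

If this returns `True` for a page you want in search, no amount of waiting or re-requesting will get it indexed until the directive is removed.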

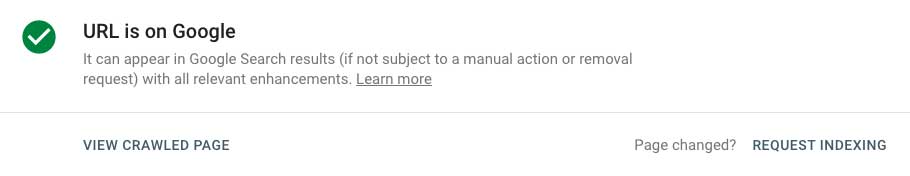

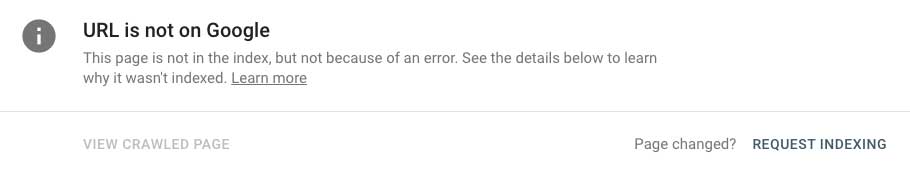

Once your post is published and available to Google, head over to Google Search Console and use the ‘Request Indexing’ feature in the URL Inspection tool. After waiting a day or two, you will see one of three possible outcomes.

The best-case scenario is that the page is fully indexed without issue. Happy days. If that doesn’t happen, take note of the specific message under the Coverage section of the URL Inspection page.
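If you are checking more than a handful of URLs, the same Coverage message is also exposed by the Search Console URL Inspection API (a POST to `https://searchconsole.googleapis.com/v1/urlInspection/index:inspect`), which returns it as `inspectionResult.indexStatusResult.coverageState`. Note that this API only reports status; it cannot trigger ‘Request Indexing’. The minimal sketch below assumes you already have the JSON response as a Python dict and sorts it into the three outcomes described above. The function name, outcome labels and sample response are hypothetical, and because the exact wording of `coverageState` may vary, it matches on substrings rather than exact strings.

```python
def classify_coverage(response):
    """Hypothetical helper: map a URL Inspection API response dict to an outcome label."""
    state = (
        response.get("inspectionResult", {})
        .get("indexStatusResult", {})
        .get("coverageState", "")
        .lower()
    )
    # Check the two "not indexed" states before the generic "indexed" case.
    if "discovered" in state and "not indexed" in state:
        return "discovered-not-indexed"
    if "crawled" in state and "not indexed" in state:
        return "crawled-not-indexed"
    if "indexed" in state and "not indexed" not in state:
        return "indexed"
    return "other"


# Illustrative, hand-written response fragment (not captured from the real API).
sample = {
    "inspectionResult": {
        "indexStatusResult": {"coverageState": "Crawled - currently not indexed"}
    }
}
print(classify_coverage(sample))  # crawled-not-indexed
```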

Discovered – currently not indexed

If the message reads ‘Discovered – currently not indexed’, it means Googlebot knows the page exists but has not yet crawled it. There are a number of reasons why this might be the case.

The most common cause of this message is that Google expected crawling your site to overload the server (whether it actually would have is another matter). Fortunately, there are steps SEOs can take to fix the problem.

It is, of course, possible that there is a genuine Crawl Budget issue, particularly if you run a site with around a million pages or more, such as a large eCommerce store. A full guide to this is outside the scope of this article. In a nutshell, you will need to carry out a technical audit of your site and steer Googlebot away from low-value pages so that it prioritises crawling your money pages.
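One common way to steer crawlers away from low-value sections is a `Disallow` rule in robots.txt. The sketch below assumes a hypothetical store whose internal search results (`/search/`) and cart pages (`/cart/`) are low value, and uses Python’s built-in `urllib.robotparser` to check which URLs the rules would block before you deploy them. Bear in mind that this parser does simple prefix matching in file order, whereas Google also supports `*` and `$` wildcards and applies the most specific matching rule, so treat it as a rough check. Robots.txt also controls crawling, not indexing: a blocked URL that is linked from elsewhere can still end up indexed without its content.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep crawlers out of internal search results and
# cart pages so crawl activity is spent on product and category pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in (
    "https://www.example.com/search/red-shoes",
    "https://www.example.com/cart/",
    "https://www.example.com/products/red-shoes",
):
    allowed = parser.can_fetch("Googlebot", url)
    print(f"{'crawl' if allowed else 'skip '}  {url}")
```

Here the search and cart URLs are reported as skipped, while the product page remains crawlable.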

If you run a smaller website, Crawl Budget is unlikely to be a real concern. Instead, Google’s algorithm may have assigned your site a crawl rate lower than your server could actually handle. In these cases it is usually possible to increase the crawl rate in Google Search Console. Once you manually raise the rate, Googlebot may go ahead and crawl the page properly on its next visit.

This is an advanced tool and should be used with caution. Google sets its limits for a reason: heavier crawling could genuinely overwhelm your server’s available bandwidth. Bad news.
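Whether or not you touch the crawl rate setting, your server’s access logs show how often Googlebot is actually visiting, which is a useful baseline before and after any change. The sketch below counts requests per day whose user agent mentions Googlebot, assuming logs in the common Apache/Nginx ‘combined’ format; the function name and the sample log lines are invented for illustration. User-agent strings are easy to spoof, so for anything beyond a rough trend you would also verify the requesting IPs with a reverse DNS lookup, as Google’s documentation on verifying Googlebot describes.

```python
import re
from collections import Counter
from datetime import datetime

# Combined Log Format: host ident user [time] "request" status size "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(lines):
    """Hypothetical helper: count requests per day whose user agent claims to be Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "googlebot" not in match["agent"].lower():
            continue
        # e.g. "10/Feb/2022:13:55:36 +0000" -> 2022-02-10
        day = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z").date()
        counts[day] += 1
    return counts


# Invented sample lines; replace with lines read from your own access log.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/Feb/2022:13:55:36 +0000] "GET /blog/new-post HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Feb/2022:14:02:11 +0000] "GET /blog/ HTTP/1.1" 200 8342 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [11/Feb/2022:09:12:45 +0000] "GET /blog/new-post HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for day, hits in sorted(googlebot_hits_per_day(sample_log).items()):
    print(day, hits)
```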

Crawled – currently not indexed

If the message reads ‘Crawled – currently not indexed’, it means Googlebot has crawled the page but has actively decided not to index it. Unfortunately for us SEOs, Google never discloses the reason, so it’s time for some detective work.

It’s possible that your page simply isn’t up to Google’s standards for indexing. Low-value, poorly written or certain types of duplicate content will struggle to be indexed because they are unlikely to be useful to searchers. In this case, you need to go back to the drawing board or get some help with your content writing. Examples of pages in this category include those with very little text, and pages designed to manipulate search results rather than provide useful information.
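Google does not publish a minimum word count or a duplicate-content threshold, but a rough self-audit can still flag candidates for a rewrite. The sketch below counts the visible words on a page and estimates how much two pages overlap using three-word shingles and Jaccard similarity. The helper names, the shingle size and the two sample pages are hypothetical choices for illustration, not anything Google uses.

```python
import re
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that is outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def words(html):
    """Lower-cased visible words of an HTML fragment."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return re.findall(r"[a-z0-9']+", " ".join(parser.parts).lower())


def shingles(tokens, size=3):
    """Set of overlapping word sequences of the given length."""
    return {tuple(tokens[i:i + size]) for i in range(len(tokens) - size + 1)}


def jaccard(a, b):
    """Share of shingles two word lists have in common (0 = none, 1 = identical)."""
    sa, sb = shingles(a), shingles(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


page_a = "<p>Red running shoes with a breathable mesh upper.</p><script>var x = 1;</script>"
page_b = "<p>Blue running shoes with a breathable mesh upper.</p>"
print(len(words(page_a)))  # 8
print(round(jaccard(words(page_a), words(page_b)), 2))  # 0.71
```

Very short pages, or pairs of pages that score close to 1, are the ones worth reviewing first.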

Let’s assume that’s not the case. You’ve created a well-constructed, technically sound and useful piece of content that Google has nonetheless decided not to index. If so (and until Google fully clears the indexing backlog, this is entirely possible), you can either be patient or, if you’re brave, take further action.

I’m brave! What can I do?

If your content is not being indexed despite meeting all the criteria for being ‘indexable’, you can use one of Google’s APIs to force the issue. The Indexing API can prompt Google to crawl pages sooner than they would otherwise have been scheduled. It is designed to notify Google of new, short-lived content that would likely be out of date by the time it was crawled naturally. The examples in the documentation are job postings and broadcast events (livestreams), which obviously have a short window of relevance.
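For reference, a notification is a single authenticated POST to the API’s `urlNotifications:publish` endpoint with a JSON body containing the page `url` and a `type` of `URL_UPDATED` (or `URL_DELETED`). The minimal Python sketch below assumes the `google-auth` and `requests` packages, a service-account key file (the `service-account.json` path is hypothetical) and, per Google’s setup guide, that the service account has been added as an owner of the property in Search Console. The helper names `build_notification` and `publish` are illustrative; the request body is built separately so it can be checked without network access.

```python
import json

ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"
SCOPES = ["https://www.googleapis.com/auth/indexing"]


def build_notification(url, deleted=False):
    """Hypothetical helper: request body for the publish endpoint."""
    return {"url": url, "type": "URL_DELETED" if deleted else "URL_UPDATED"}


def publish(url, key_file="service-account.json"):
    """Hypothetical helper: send one notification (needs google-auth and requests)."""
    # Imported here so build_notification() works even without google-auth installed.
    from google.auth.transport.requests import AuthorizedSession
    from google.oauth2 import service_account

    credentials = service_account.Credentials.from_service_account_file(
        key_file, scopes=SCOPES
    )
    session = AuthorizedSession(credentials)  # a requests.Session that adds OAuth headers
    response = session.post(ENDPOINT, json=build_notification(url))
    response.raise_for_status()
    return response.json()


if __name__ == "__main__":
    # Print the body that publish() would send, without calling the API.
    print(json.dumps(build_notification("https://www.example.com/blog/new-post")))
```

Calling `publish("https://www.example.com/blog/new-post")` would send the notification for real. Publish requests are subject to a daily quota, so this is a tool for individual pages rather than a whole site.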

The API does work on content that doesn’t meet these criteria, and it is currently the fastest way to get new pages indexed. But because it is open to abuse, using it this way could attract penalties in the future. Can SEOs currently use this tool to their advantage? Certainly. Should they? That is less clear. At the end of the day, creating quality content and following established technical best practices will always be the safest approach.